diff --git a/README-CN.md b/README-CN.md

index ca22f4f..2954456 100644

--- a/README-CN.md

+++ b/README-CN.md

@@ -16,8 +16,7 @@

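🤩 **Easy & Awesome** Good results with only a downloaded or newly trained synthesizer, reusing the pretrained encoder/vocoder or using the real-time HiFi-GAN as the vocoder

-## Quick Start

-> For a beginner-friendly version that needs no training, see [Quick Start (Newbie)](https://github.com/babysor/Realtime-Voice-Clone-Chinese/wiki/Quick-Start-(Newbie))

+🌍 **Webserver Ready** Serve your training results for remote calls

### 1. Install requirements

> Follow the original repository to test whether your environment is fully prepared.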

@@ -27,48 +26,58 @@

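> If installing with pip fails with `ERROR: Could not find a version that satisfies the requirement torch==1.9.0+cu102 (from versions: 0.1.2, 0.1.2.post1, 0.1.2.post2)`, your Python version may be too old; installation succeeds with 3.9

* Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).

* Run `pip install -r requirements.txt` to install the remaining required packages.

-* Install webrtcvad with `pip install webrtcvad-wheels`.

+* Install webrtcvad: `pip install webrtcvad-wheels`.

-### 2. Train the synthesizer with a dataset

+### 2. Prepare pretrained models

+Either train your own model or download one trained by others in the community:

+#### 2.1 Train your own synthesizer model with a dataset (choose either this or 2.2)

* Download a dataset and unzip it: make sure you can access all audio files (such as .wav) in the *train* folder

* Preprocess the audio and mel spectrograms: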

`python pre.py <datasets_root>`

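You can pass `--dataset {dataset}`; aidatatang_200zh, magicdata, and aishell3 are supported

> For example, if the `aidatatang_200zh` files you downloaded are on drive D and the `train` folder is at `D:\data\aidatatang_200zh\corpus\train`, your `datasets_root` is `D:\data\`

->If you get `the page file is too small to complete the operation`, see this [article](https://blog.csdn.net/qq_17755303/article/details/112564030) and change the virtual memory to 100G (102400); for example, if the files are on drive D, change the virtual memory of drive D

-

* Train the synthesizer: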

`python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer`

-* When you see the attention line appear and the loss meet your needs in the training folder *synthesizer/saved_models/*, go to the next step.

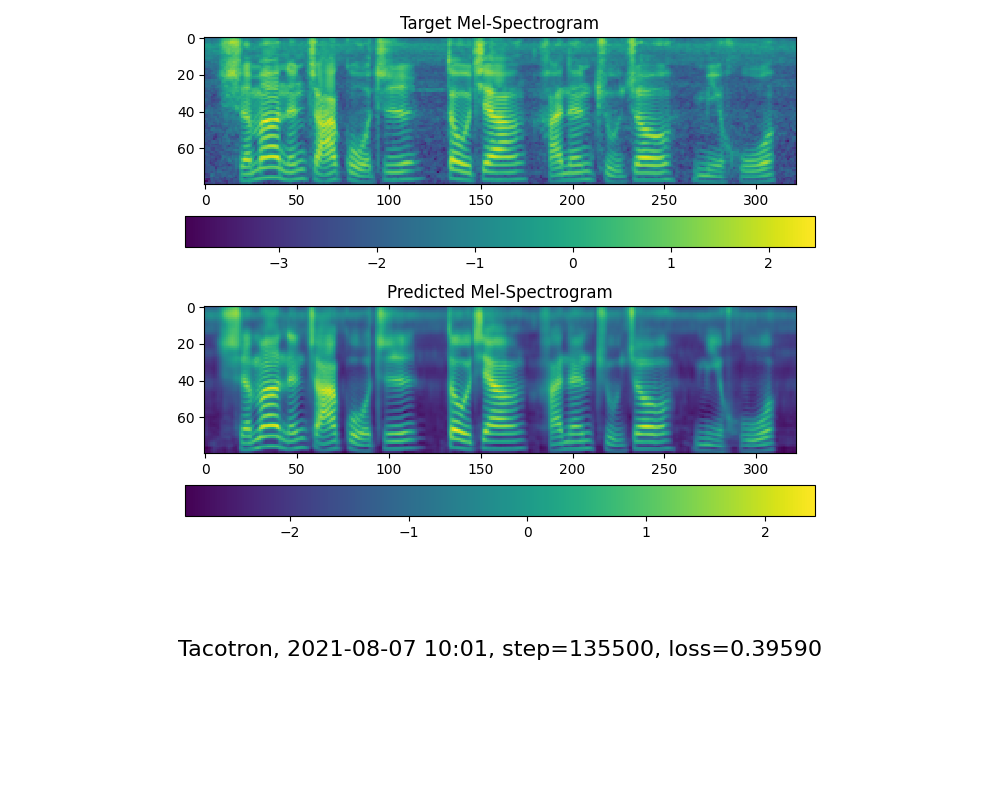

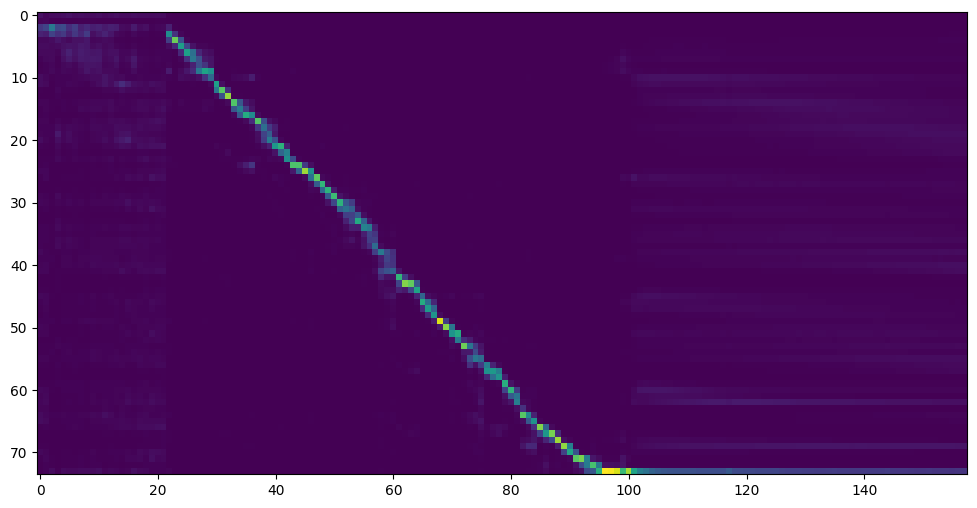

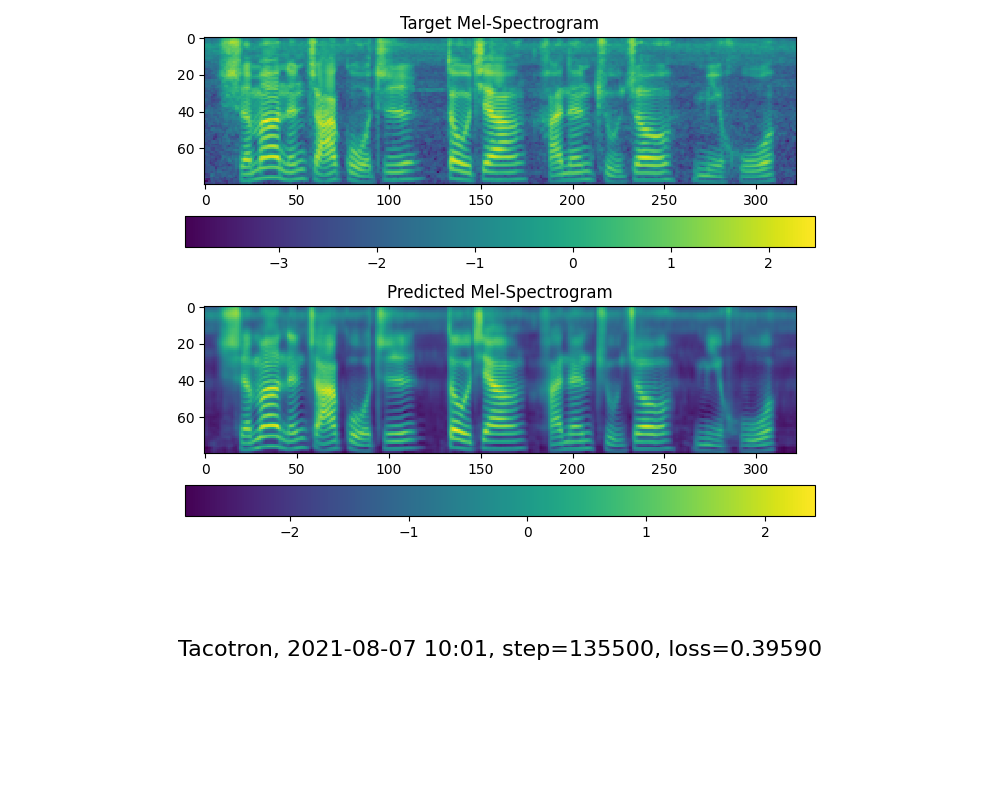

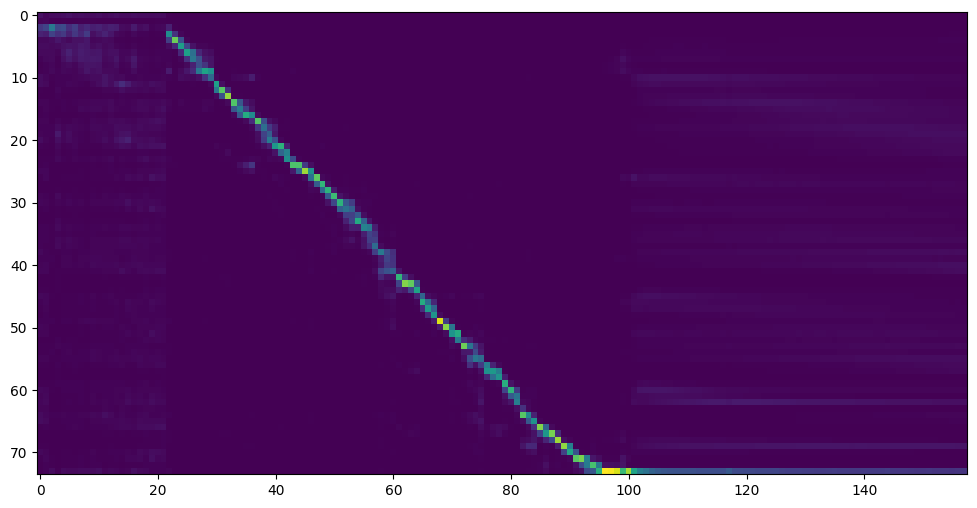

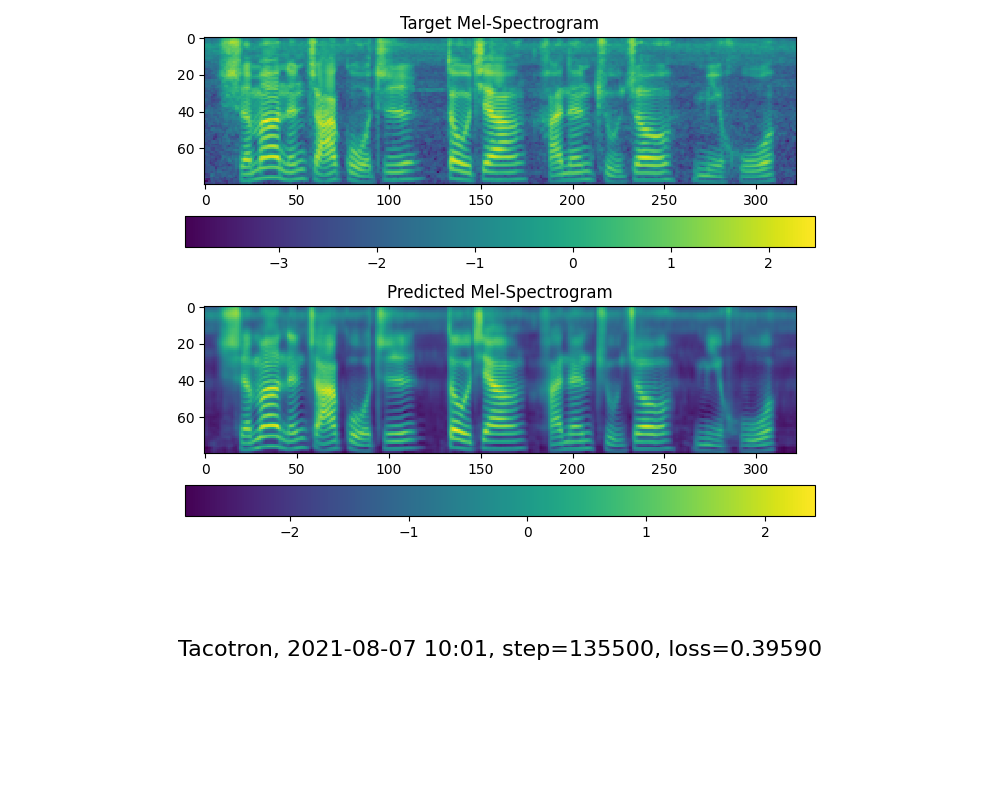

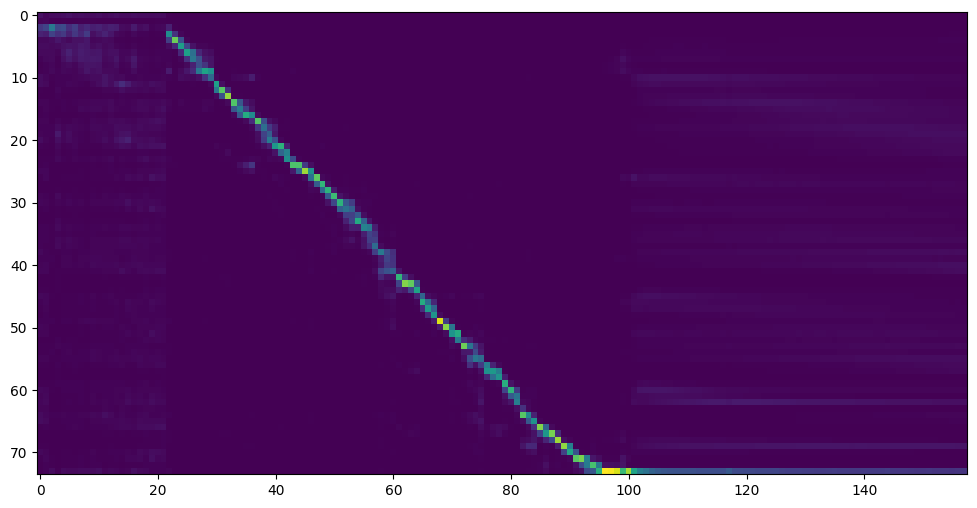

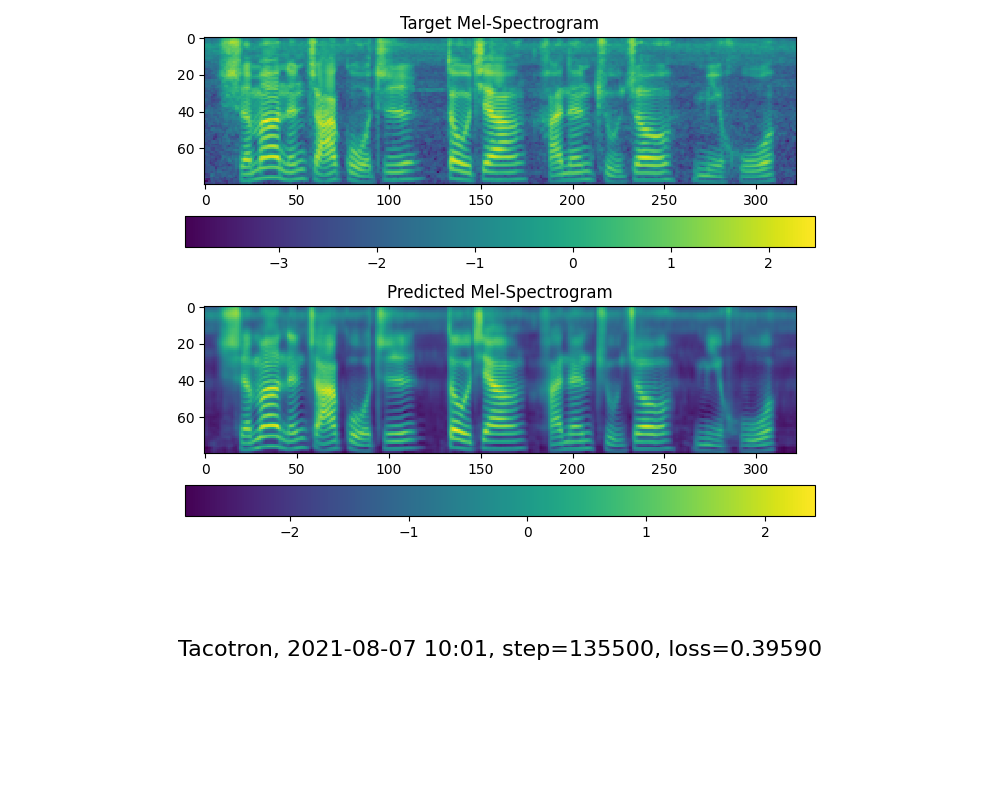

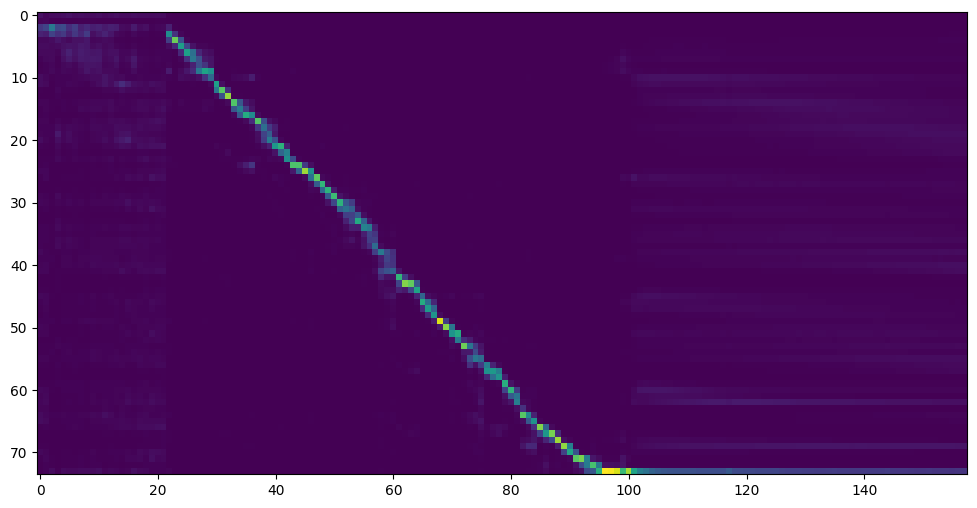

-> FYI, my attention appeared after 18k steps, and the loss dropped below 0.4 after 50k steps

-

-

+* When you see the attention line appear and the loss meet your needs in the training folder *synthesizer/saved_models/*, go to step 3 to launch the program.
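
The `datasets_root` rule from the preprocessing step above can be sketched in Python. This is only an illustration: the helper name `datasets_root_for` is hypothetical and not part of the repository, and it assumes the `aidatatang_200zh` layout from the example, where `train` sits three levels below `datasets_root`.

```python
from pathlib import PureWindowsPath


def datasets_root_for(train_dir: str) -> str:
    # Hypothetical helper, not part of the repository.
    # Assumed layout: <datasets_root>/aidatatang_200zh/corpus/train
    train = PureWindowsPath(train_dir)
    # parents[0] is corpus, parents[1] is aidatatang_200zh,
    # parents[2] is the folder to pass as <datasets_root>.
    return str(train.parents[2])


print(datasets_root_for(r"D:\data\aidatatang_200zh\corpus\train"))
```

The result is the folder that contains the dataset folder, not the dataset folder itself. `pathlib` drops the trailing backslash, so the printed value is `D:\data`.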

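-### 2.2 Use a pretrained synthesizer

-> If you have no suitable hardware or do not want to spend time tuning, you can use models contributed by other users (more contributions are welcome):

+#### 2.2 Use a synthesizer pretrained by the community (choose either this or 2.1)

+> If you have no suitable hardware or do not want to spend time tuning, you can use models contributed by the community (more contributions are welcome):

| Author | Download link | Preview | Info |

| --- | ----------- | ----- | ----- |

|@FawenYo | https://drive.google.com/file/d/1H-YGOUHpmqKxJ9FRc6vAjPuqQki24UbC/view?usp=sharing [Baidu Pan link](https://pan.baidu.com/s/1vSYXO4wsLyjnF3Unl-Xoxg) Code: 1024 | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps, Taiwanese accent

|@miven| https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ Code: 2021 | https://www.bilibili.com/video/BV1uh411B7AD/ | 150k steps; older version, needs the fix described in this [issue](https://github.com/babysor/MockingBird/issues/37)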

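-### 2.3 Train the vocoder (Optional)

+#### 2.3 Train the vocoder (optional)

+This has little effect on the results, and 3 vocoders are already bundled. If you want to train your own, use the following commands.

* Preprocess the data:

`python vocoder_preprocess.py <datasets_root>`

* Train the wavernn vocoder: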

-`python vocoder_train.py mandarin <datasets_root>`

+`python vocoder_train.py <trainid> <datasets_root>`

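+> Replace `<trainid>` with an identifier of your choice; training again with the same identifier continues from the existing model

* Train the hifigan vocoder: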

-`python vocoder_train.py mandarin <datasets_root> hifigan`

-

-### 3. Launch the toolbox

-You can then try the toolbox:

+`python vocoder_train.py <trainid> <datasets_root> hifigan`

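+> Replace `<trainid>` with an identifier of your choice; training again with the same identifier continues from the existing model

+

+### 3. Launch the program or toolbox

+You can try the following commands:

+

+### 3.1 Launch the web program:

+`python web.py`

+Once it is running, open the address in a browser; the default is `http://localhost:8080`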

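+> Note: the interface is currently rather buggy:

+> * After clicking `录制` (Record) for the first time, wait a few seconds for the browser to start recording properly, otherwise the audio will be doubled

+> * To finish recording, click `停止` (Stop) instead of clicking `录制` (Record) again

+> * Only new manual recordings (16 kHz) are supported; recordings over 4MB are not supported, and the best length is 5~15 seconds

+> * The first model found is used by default; if you are comfortable with code, you can change this in `web\__init__.py`.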
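
The recording limits listed above (16 kHz, no more than 4MB, ideally 5~15 seconds) can be checked on a saved WAV file with a short script. This is a minimal sketch using only the Python standard library. The function name `check_recording` is hypothetical, the web program does not ship it, and reading "4MB" as 4 MiB is an assumption.

```python
import os
import wave

MAX_BYTES = 4 * 1024 * 1024   # assumption: "4MB" is read as 4 MiB
EXPECTED_RATE = 16_000        # 16 kHz, as stated in the note
BEST_SECONDS = (5.0, 15.0)    # recommended length range in seconds


def check_recording(path: str) -> list[str]:
    """Return a list of problems found; an empty list means the file fits the limits."""
    problems = []
    if os.path.getsize(path) > MAX_BYTES:
        problems.append("file is larger than 4MB")
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        seconds = wav.getnframes() / rate
    if rate != EXPECTED_RATE:
        problems.append(f"sample rate is {rate} Hz, expected {EXPECTED_RATE} Hz")
    if not BEST_SECONDS[0] <= seconds <= BEST_SECONDS[1]:
        problems.append(f"length is {seconds:.1f}s, outside 5~15 seconds")
    return problems
```

Calling `check_recording("my_voice.wav")` on a hypothetical file returns the list of violated limits, which may help explain poor results before blaming the model.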

+

+### 3.2 Launch the toolbox:

`python demo_toolbox.py -d <datasets_root>`

-

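-> Good news🤩: Chinese can be used directly

+> Specify a valid dataset path. If it contains a supported dataset, it is loaded automatically for debugging, and the path is also used as the storage directory for manually recorded audio.

## Release Note

2021.9.8 Added Hifi-GAN Vocoder support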

@@ -148,4 +157,12 @@ voc_pad =2

Please refer to issue [#37](https://github.com/babysor/MockingBird/issues/37)

#### 5. How to improve CPU and GPU utilization?

-Adjust the batch_size parameter as appropriate to improve it

\ No newline at end of file

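+Adjust the batch_size parameter as appropriate to improve it

+

+#### 6. What if `the page file is too small to complete the operation` occurs?

+See this [article](https://blog.csdn.net/qq_17755303/article/details/112564030) and change the virtual memory to 100G (102400); for example, if the files are on drive D, change the virtual memory of drive D

+

+#### 7. When is training considered complete?

+First, attention must have appeared; second, the loss must be low enough, which depends on your hardware and dataset. For reference, my attention appeared after 18k steps, and the loss dropped below 0.4 after 50k steps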

+

+

\ No newline at end of file

diff --git a/README.md b/README.md

index 287bba0..27a6cec 100644

--- a/README.md

+++ b/README.md

@@ -14,6 +14,7 @@

🤩 **Easy & Awesome** effect with only newly-trained synthesizer, by reusing the pretrained encoder/vocoder

+🌍 **Webserver Ready** to serve your results for remote calls

### [DEMO VIDEO](https://www.bilibili.com/video/BV1sA411P7wM/)

@@ -29,24 +30,20 @@

* Run `pip install -r requirements.txt` to install the remaining necessary packages.

* Install webrtcvad with `pip install webrtcvad-wheels` (if needed)

> Note that we are using the pretrained encoder/vocoder but not the synthesizer, since the original model is incompatible with Chinese symbols. This means demo_cli does not work at the moment.

-### 2. Train synthesizer with your dataset

-* Download aidatatang_200zh or other dataset and unzip: make sure you can access all .wav in *train* folder

+### 2. Prepare your models

+You can either train your models or use existing ones:

+#### 2.1. Train synthesizer with your dataset

+* Download a dataset and unzip it: make sure you can access all .wav files in the folder

* Preprocess the audio and the mel spectrograms:

`python pre.py <datasets_root>`

-Allow parameter `--dataset {dataset}` to support aidatatang_200zh, magicdata, aishell3

-

->If it happens `the page file is too small to complete the operation`, please refer to this [video](https://www.youtube.com/watch?v=Oh6dga-Oy10&ab_channel=CodeProf) and change the virtual memory to 100G (102400), for example : When the file is placed in the D disk, the virtual memory of the D disk is changed.

-

+Pass `--dataset {dataset}` to select the dataset; aidatatang_200zh, magicdata, aishell3, etc. are supported.

* Train the synthesizer:

`python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer`

* Go to the next step when you see the attention line appear and the loss meets your needs in the training folder *synthesizer/saved_models/*.

-> FYI, my attention came after 18k steps and loss became lower than 0.4 after 50k steps.

-

-

-### 2.2 Use pretrained model of synthesizer

+#### 2.2 Use pretrained model of synthesizer

> Thanks to the community, some models will be shared:

| author | Download link | Preview Video | Info |

@@ -54,10 +51,8 @@ Allow parameter `--dataset {dataset}` to support aidatatang_200zh, magicdata, ai

|@FawenYo | https://drive.google.com/file/d/1H-YGOUHpmqKxJ9FRc6vAjPuqQki24UbC/view?usp=sharing [Baidu Pan](https://pan.baidu.com/s/1vSYXO4wsLyjnF3Unl-Xoxg) Code:1024 | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps with local accent of Taiwan

|@miven| https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ code:2021 | https://www.bilibili.com/video/BV1uh411B7AD/

-> A link to my early trained model: [Baidu Yun](https://pan.baidu.com/s/10t3XycWiNIg5dN5E_bMORQ)

-Code:aid4

-

-### 2.3 Train vocoder (Optional)

+#### 2.3 Train vocoder (Optional)

+> Note: the vocoder makes little difference to the results, so you may not need to train a new one.

* Preprocess the data:

`python vocoder_preprocess.py <datasets_root>`

@@ -67,15 +62,13 @@ Code:aid4

* Train the hifigan vocoder

`python vocoder_train.py mandarin <datasets_root> hifigan`

-### 3. Launch the Toolbox

-You can then try the toolbox:

+### 3. Launch

+#### 3.1 Using the web server

+You can then run `python web.py` and open it in a browser; the default address is `http://localhost:8080`
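
To confirm that the web server is answering before opening a browser, a small reachability check can help. This is a minimal sketch using only the Python standard library. The function name `server_is_up` is hypothetical and not part of the repository, and it assumes only the default address mentioned above, not any particular route.

```python
import urllib.error
import urllib.request


def server_is_up(url: str = "http://localhost:8080", timeout: float = 3.0) -> bool:
    """Return True if something answers HTTP at the given URL."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server replied, even if with an error status code.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or name resolution failure.
        return False


if __name__ == "__main__":
    print(server_is_up())
```

A `False` result usually means `python web.py` is not running yet or is listening on a different port.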

+#### 3.2 Using the Toolbox

+You can then try the toolbox:

`python demo_toolbox.py -d <datasets_root>`

-or

-`python demo_toolbox.py`

-

-> Good news🤩: Chinese Characters are supported

-

## Reference

> This repository is forked from [Real-Time-Voice-Cloning](https://github.com/CorentinJ/Real-Time-Voice-Cloning), which only supports English.

@@ -152,4 +145,13 @@ voc_pad =2

Please refer to issue [#37](https://github.com/babysor/MockingBird/issues/37)

#### 5. How to improve CPU and GPU occupancy rate?

-Adjust the batch_size as appropriate to improve

\ No newline at end of file

+Adjust the batch_size as appropriate to improve it

+

+

+#### 6. What if `the page file is too small to complete the operation` occurs?

+Please refer to this [video](https://www.youtube.com/watch?v=Oh6dga-Oy10&ab_channel=CodeProf) and change the virtual memory to 100G (102400). For example, if the files are on drive D, change the virtual memory of drive D.

+

+#### 7. When should I stop training?

+FYI, my attention came after 18k steps and loss became lower than 0.4 after 50k steps.

+

+